Abstracts Aren't Always Accurate

We should read studies in full rather than rely on their abstracts, since abstracts are often misleading.

Last year, the blogger Sean Last debated the Twitch streamer STRDST on systemic racism (the debate has since been deleted). Near the end of the debate, STRDST argued that a study Sean had cited did not support him because its abstract said something different. From there, both parties argued over the validity of abstracts, with STRDST charging that Sean thinks he knows more than the researchers. Because of this, I decided to see how accurate abstracts are and whether we can trust what they say without reading the full report ourselves.

Looking at biomedical research, Li et al. (2017) analyzed 17 studies that met their eligibility criteria. The level of inconsistency between the abstract and the full paper ranged from 4% to 78%, with the average being 39%. In the studies that differentiated major from minor inconsistencies, the level of major inconsistencies ranged from 5% to 45%, with an average of 19%. According to the authors,

“All the included studies concluded that abstracts were frequently inconsistently reported, and that efforts were needed to improve abstract reporting in primary biomedical research.”

In the 9 included studies that examined conclusions, covering 869 abstracts, between 15% and 35% of abstracts drew conclusions that were inconsistent with the full report, and 17% made strong claims that were not reflected in the full report. Also looking at the biomedical field, Boutron and Ravaud (2018) give a wider discussion of previous studies that checked whether researchers misreported results, methods, or figures, selectively reported their outcomes and methods, ignored or understated results that contradicted or counterbalanced their original hypothesis, misinterpreted their results, or engaged in other types of spin.

Turning to psychology and psychiatry, Jellison et al. (2019) looked at RCTs published between 2012 and 2017 in JAMA Psychiatry, American Journal of Psychiatry, Journal of Child Psychology and Psychiatry, Psychological Medicine, the British Journal of Psychiatry, and Journal of the American Academy of Child and Adolescent Psychiatry, and checked whether the authors put “spin” on their data. Evidence of spin consisted of authors focusing on statistically significant results, interpreting statistically nonsignificant results as equivalent or non-inferior, using rhetoric in their interpretation of nonsignificant results, or claiming benefits from an intervention when the results were not statistically significant.

In their sample of 116 papers, spin was found in 56% of papers. 2% of papers had spin in their titles, 21% had spin in their abstract results sections, and 49% had spin in their abstract conclusion sections. 15% of papers had spin in both of those sections.
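To get a feel for what these percentages mean in absolute terms, the short sketch below converts them into approximate paper counts. The counts are back-calculated from rounded percentages, so they are estimates rather than figures taken from the paper, and the function name `approximate_count` and the dictionary labels are my own.

```python
# Sample size and percentages as reported above for Jellison et al. (2019).
SAMPLE_SIZE = 116

reported_percentages = {
    "any spin": 56,
    "spin in title": 2,
    "spin in abstract results": 21,
    "spin in abstract conclusion": 49,
    "spin in both abstract sections": 15,
}


def approximate_count(percent, sample_size):
    """Convert a rounded percentage into an approximate number of papers."""
    return round(percent / 100 * sample_size)


# Estimated counts only: the source percentages are already rounded.
approximate_counts = {
    label: approximate_count(percent, SAMPLE_SIZE)
    for label, percent in reported_percentages.items()
}

for label, count in approximate_counts.items():
    print(f"{label}: about {count} of {SAMPLE_SIZE} papers")
```

Put this way, roughly 65 of the 116 papers showed some form of spin, and the abstract conclusion was the most common place to find it.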

Discussing previous studies, the authors remark that,

“Lazarus et al identified evidence of spin in 84% of abstracts of non-randomised trials of therapeutic interventions.(17) In a similar survey of RCTs in robotic colorectal surgery, Patel et al found that 82% of trials contained spin in the abstract or conclusion section.(18) Lastly, in a study evaluating only misleading primary outcome reporting, Mathieu et al found that 23% of rheumatology trials had conclusions that disagreed with the results of the abstract.(19)”

Pitkin, Branagan, and Burmeister (1999) looked at 104 randomly selected studies appearing in Annals of Internal Medicine, BMJ, JAMA, Lancet, and New England Journal of Medicine between July 1996 and June 1997. The researchers also looked at all articles published between July 1996 and August 15, 1997, in the Canadian Medical Association Journal. One of three examiners identified each piece of information in the abstract and then attempted to find its source in the full report. Two types of inconsistencies were sought: (1) data given differently in the abstract and the body; and (2) data given in the abstract but not in the full report. The proportion of abstracts with inconsistencies ranged from 18% to 68%, with an average of 43%. 24% of papers had both types of inconsistency, with type (2) being the most common.

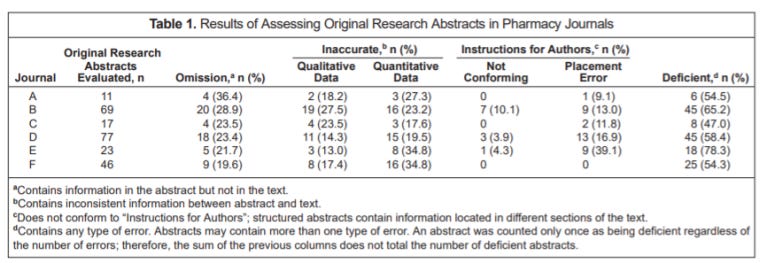

Ward, Kendrach, and Price (2004) looked at 243 abstracts published between June 2001 and May 2002 in the American Journal of Health-System Pharmacy, The Annals of Pharmacotherapy, The Consultant Pharmacist, Hospital Pharmacy, Journal of the American Pharmacists Association, and Pharmacotherapy: The Journal of Human Pharmacology and Drug Therapy. An abstract was counted as containing an error if “data presented in the abstract were omitted from the article text, quantitative data were misrepresented, or the meaning of qualitative data varied from intended meaning in the text.”

Of the abstracts examined, 24.7% contained omissions, 25.1% contained quantitative inaccuracies, 19.3% contained qualitative inaccuracies, 33.3% contained one error (defined as an omission or inaccuracy), and 15.6% contained 2 errors. 60.5% of abstracts contained at least one deficiency.

Looking at otolaryngology journals, specifically Otolaryngology-Head and Neck Surgery, Laryngoscope, Archives of Otolaryngology-Head and Neck Surgery, and Annals of Otology, Rhinology and Laryngology, McCoul et al. (2010) examined the abstracts of 418 articles and rated their quality against a set of criteria.

While the majority of abstracts provided information about the research design, sample size, source of the data, and quantitative results, 91% left out limitations, 79% left out geographical locations, 75% left out confidence intervals, 6% left out dropouts or losses, and 44% left out harms and adverse effects. Readers of these journals may come away with biased or wrong conclusions if they only read the abstract of a study.

Siebers (2001) looked at articles published in Clinical Chemistry over 6 months from January 2000. Data in the abstract were checked against the data in the article. Inconsistencies were classified as (1) data in the abstract differing from the data presented in the full report; or (2) data presented in the abstract being absent from the article. Of the 87 articles looked at, 23% had abstracts containing data that were either inconsistent with the full report or absent from it. Two-thirds of the inconsistencies involved data in the abstract differing from what was reported in the full paper, whereas one-third involved data in the abstract that did not appear in the article at all.

Looking at 40 RCT papers published in Spine, The Spine Journal, and Journal of Spinal Disorders and Techniques during a 10-year period (2001–2010), Jeff et al. (2014) classified a report as having inconsistencies if its abstract contained data that were inconsistent with the manuscript or failed to include important findings from the manuscript.

75% of papers looked at had at least 1 inconsistency. 37.5% of abstracts did not specify their method of randomization, and of those which did, 28% were considered unacceptable. The primary outcome was only reported in 22.5% of abstracts, statistically significant effects were only reported in 60% of abstracts, and pertinent negative effects weren’t reported in 40% of abstracts.

Looking at research published in Nature, Park, Peacey, and Munafo (2014) found that abstracts overstate their research findings and often contain claims that aren’t relevant to the underlying research. According to the authors, there is “a mismatch between the claims made in the abstracts, and the strength of evidence for those claims based on a neutral analysis of the data, consistent with the occurrence of herding.”

In conclusion, we should not expect abstracts to give us accurate information about a report, even though they are written by the researchers themselves. Not only can they be misleading, but readers can come away with a biased or false conclusion based on a study’s abstract alone. We should read the papers ourselves, examine the data ourselves (assuming we can do it accurately), and not rely on the words seen in an abstract.